What is this thing called “impact”? More specifically, what are we talking about when we speak of the non-academic impact of research? In this post I want to explore some of the possible answers to this question, which I’ve been looking at as part of a forthcoming working paper on the ESRC STEPS Centre’s approach to impact. I also want to step back to ask a related, but wider question: how does the idea of impact affect the way that researchers and communicators think about how change happens?

Impact is a deceptively simple word for a complex beast. Demands on researchers to plan and report on the wider social effects of their work are not new, but they have grown more intense in recent years in response to various processes, including the Research Excellence Framework (REF – due 2014) and the requirements of funders (DFID and ESRC, the ones I’m most acquainted with, each frame impact in slightly different ways).

The imperative to seek funding, to justify what researchers do, and to show the relevance of research to the ‘real world’, as opposed to the increasingly negative view of academics sitting in ivory towers: all these add weight to the idea of impact. But as the pressure to predict, plan and measure impact grows, it meets head on the challenge of attributing social change or benefit to a programme or piece of research. Perhaps without our even realising it, impact also displaces other ways of thinking about how change happens in relation to research.

Definitions

The word ‘impact’ came into use in a figurative sense to mean ‘forceful impression’ two centuries ago. It implies movement, collision and the exertion of force: more metaphorically solid than influence, effect and benefit (though these are words often used with or in place of ‘impact’).

But it’s problematic too. Even though we know it’s just a metaphor, ‘impact’ creates an image that’s at odds with how knowledge interacts with, say, public policy, which is a complex, iterative process with many potential causes and drivers of change. So a great deal of effort has been spent in developing nuanced understandings of impact, while avoiding defining it too narrowly, so it can be used for a variety of disciplines and contexts.

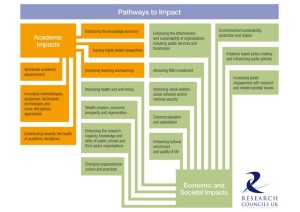

RCUK’s definition, for example, talks about “fostering global performance”, increasing effectiveness of public services and policy, and enhancing quality of life. The RCUK typology diagram (pdf) lists 12 types of non-academic ‘pathways to impact’, including environmental sustainability and increasing public engagement with research among others.

The ESRC breaks impact down into three categories: instrumental, conceptual and capacity building. DFID talks about ‘research uptake’, which emphasises the use of research evidence, and distinguishes between ‘outcomes’ and ‘impacts’.

The REF, in its guidance for 2014, defines impact as “[including], but …not limited to, an effect on, change or benefit to:

- the activity, attitude, awareness, behaviour, capacity, opportunity, performance, policy, practice, process or understanding

- of an audience, beneficiary, community, constituency, organisation or individuals

- in any geographic location whether locally, regionally, nationally or internationally.”

I haven’t mentioned a key common factor in the guidance from these organisations. They all, to some extent, acknowledge that research relates to society in complex, non-linear ways. They also stress the contributory nature of impact. For example, the ESRC views impact “in the context of complex non-linear policy and practice development processes, where research is only one of many influencing factors” (pdf source here).

Diversity

There is no consensus on what impact is, and the framings of it are diverse. An analogy springs to mind: the Castle of Kafka’s novel of that title, in which the hapless protagonist aims to gain access to a mysterious castle, approaches it by many pathways, but never succeeds in reaching it.

To deal with the problem of attribution, impact has also been conceptualised as a journey with various stages, as discussed by Exeter’s DESCRIBE project, which reviewed various concepts and definitions of impact (report here in pdf). The LSE Public Policy Group’s Handbook on impact for social scientists distinguishes primary from secondary impacts: “primary impacts” are “brute facts” that can be recorded and observed, while “secondary impacts” are more subjective – for example, a desired or undesired change in policy. Another approach uses the term ‘productive interactions’ to describe encounters between researchers and publics, and ‘impacts’ where these interactions result in new or different behaviours.

The diversity of framings of impact certainly reflects the diversity of types of research that are out there. But I think it also reflects the difficulty of capturing how research interacts with society.

“Impact” has become the dominant way of thinking and talking about what research does for society in certain contexts – namely, when justifying and deciding on how to fund research and how to communicate about it.

I don’t think that this dominance means that the idea of impact is wrong or useless. My experience with the STEPS Centre is that thinking about impact has helped us plan how to engage most effectively with different audiences in diverse settings. It has provided one important way for us to look at how we interact with audiences and what the consequences might be, and a way of reporting on our activities to our funders and our stakeholders. Thinking about impact has also provoked us to think more strategically about communications, and about political opportunities and sensitivities where we operate.

Challenging impact

But all of this doesn’t stop us from recognising that there are other ways of thinking about how research and society interact.

The ideas that social science produces can change society itself, so society could also be said to have an ‘impact’ on research as much as the other way round. Social and political change is complex and unexpected things happen; research is one part of a constantly-changing puzzle, not separate from it. Research of any kind stands on the shoulders of what has gone before – or seeks to break it down and build something new. The academic voice is only one of many voices in a conversation about how the world might look and how it should change.

Social science is also about showing “where the power networks lie – pun intended” as Emma Uprichard recently wrote. Sheila Jasanoff, writing about sociology of scientific knowledge (SSK) in 1996, says that “to destabilise the dominant stories… is a political enterprise” and that the project of science and technology studies is to “render more visible the connections and the unseen patterns that modern societies have taken pains to conceal”. Challenging power, and the uses of certain kinds of knowledge in reinforcing or hiding it, means looking critically at some of the ways that the idea of impact might be used in the service of power.

Reconciling impact with our understanding of complexity, power and politics, the way knowledge is shaped by different groups and the way it shapes us, is never going to be straightforward. I don’t know whether it is possible, or even desirable, to develop ever-more nuanced and qualified definitions of impact to address this problem. Perhaps one way forward is to acknowledge that it is an imperfect system and to use it as wisely as possible – as one tool among others for looking at how research fits into the bigger picture.

Photo: Hyundai i40 by touring_club on Flickr (creative commons)